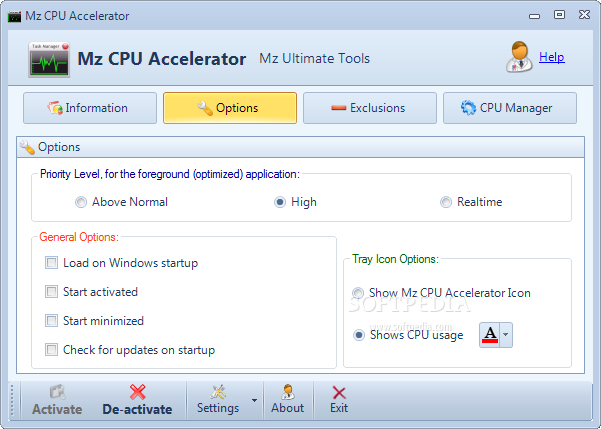

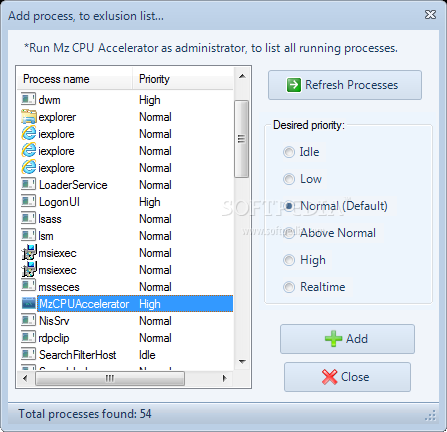

Configuring PotPlayer for GPU-accelerated video playback with DirectX Video Acceleration (DXVA), Compute Unified Device Architecture (CUDA) or high-performance software decoding

CPU vs. GPU vs. TPU | Complete Overview And The Difference Between CPU, GPU, and TPU - C&T Solution Inc. | 智愛科技股份有限公司

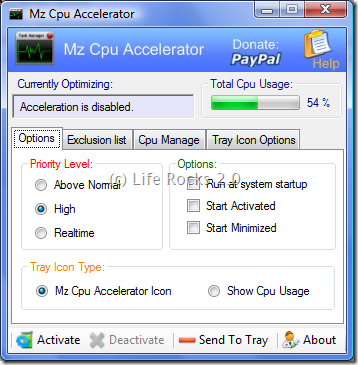

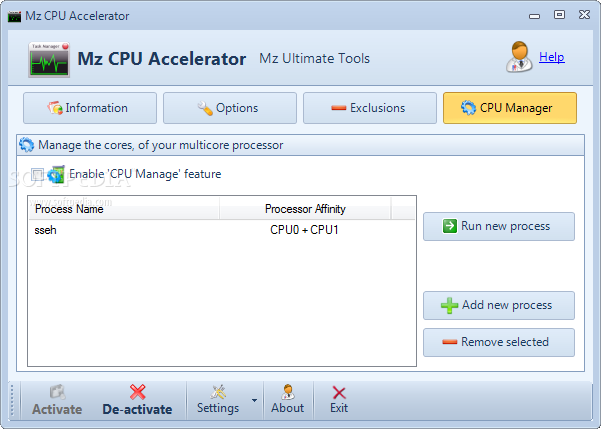

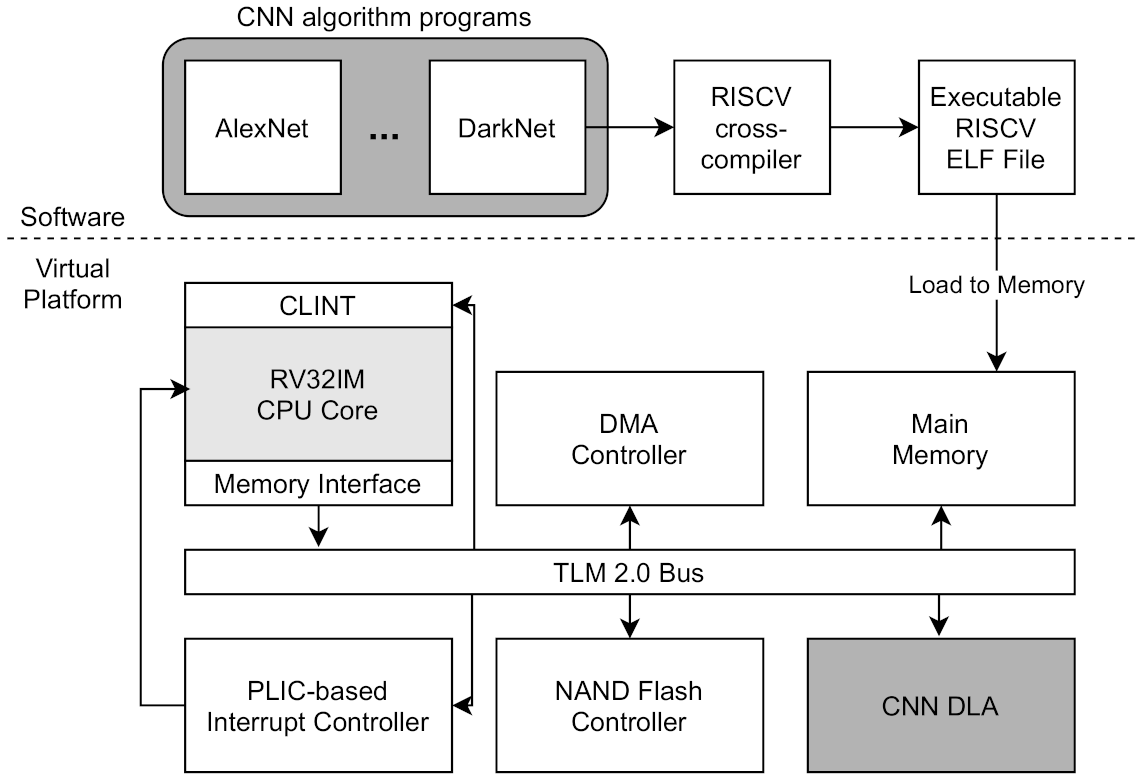

Electronics | Free Full-Text | RISC-V Virtual Platform-Based Convolutional Neural Network Accelerator Implemented in SystemC

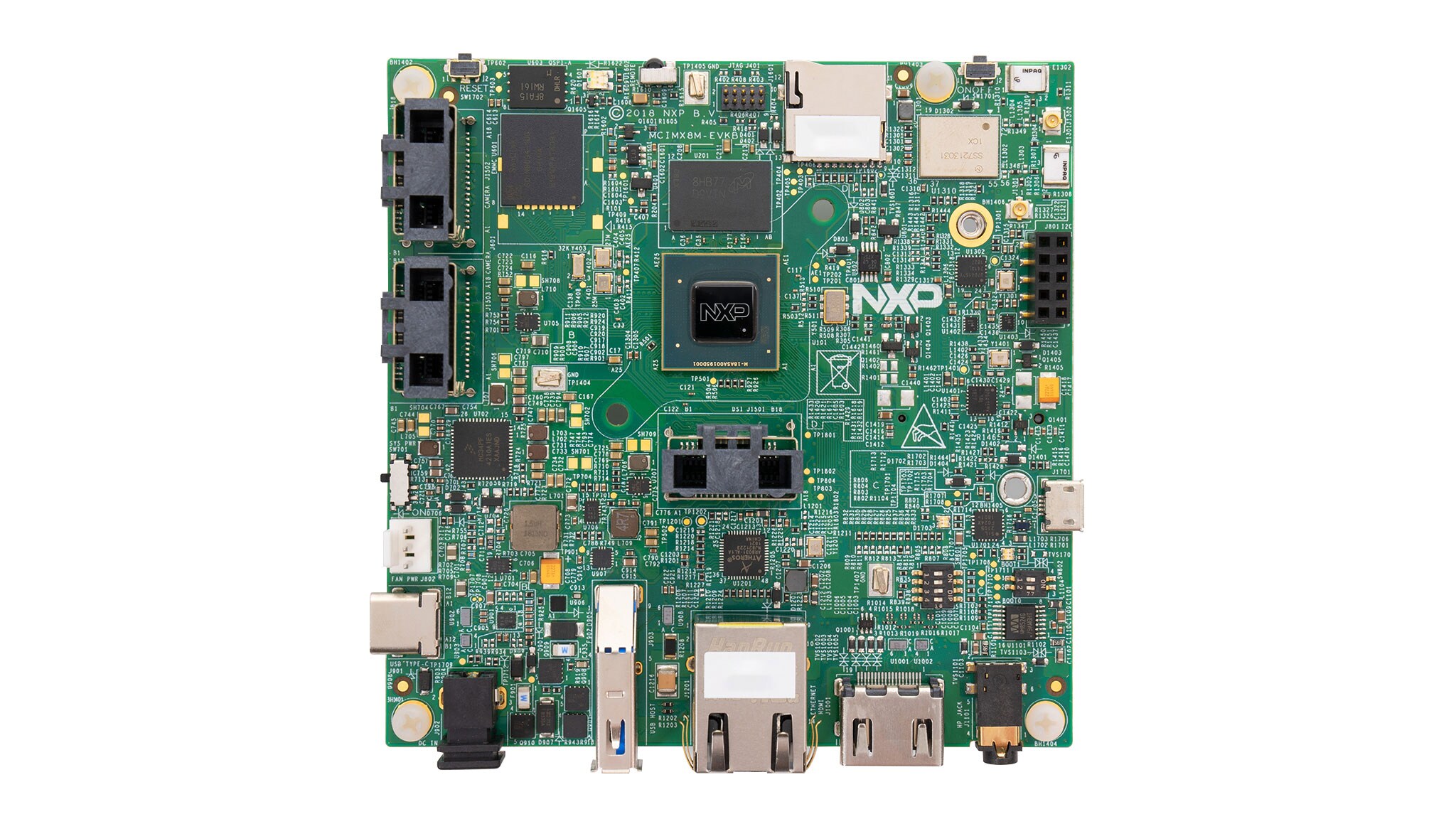

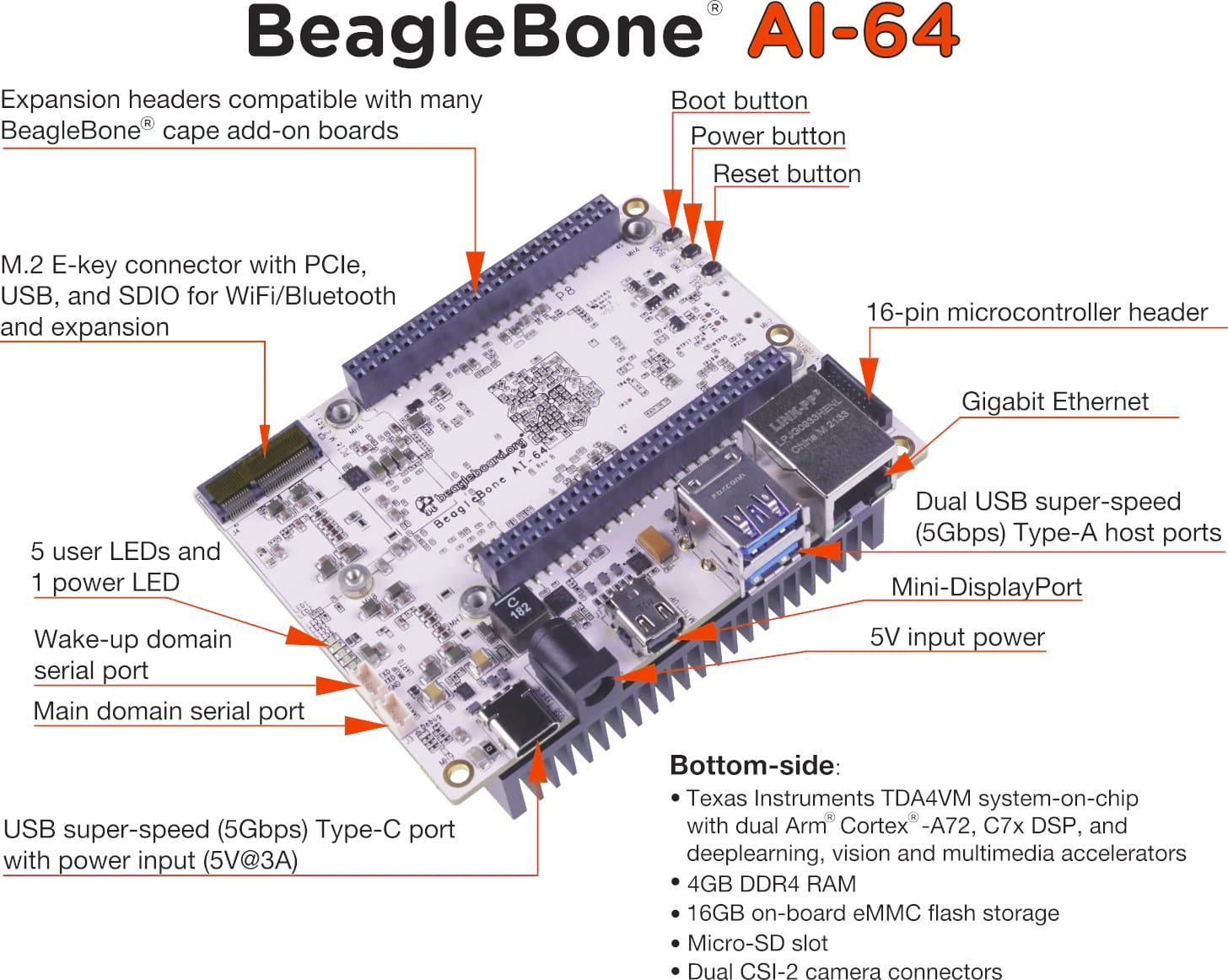

BeagleBone AI-64 SBC features TI TDA4VM Cortex-A72/R5F SoC with 8 TOPS AI accelerator - CNX Software

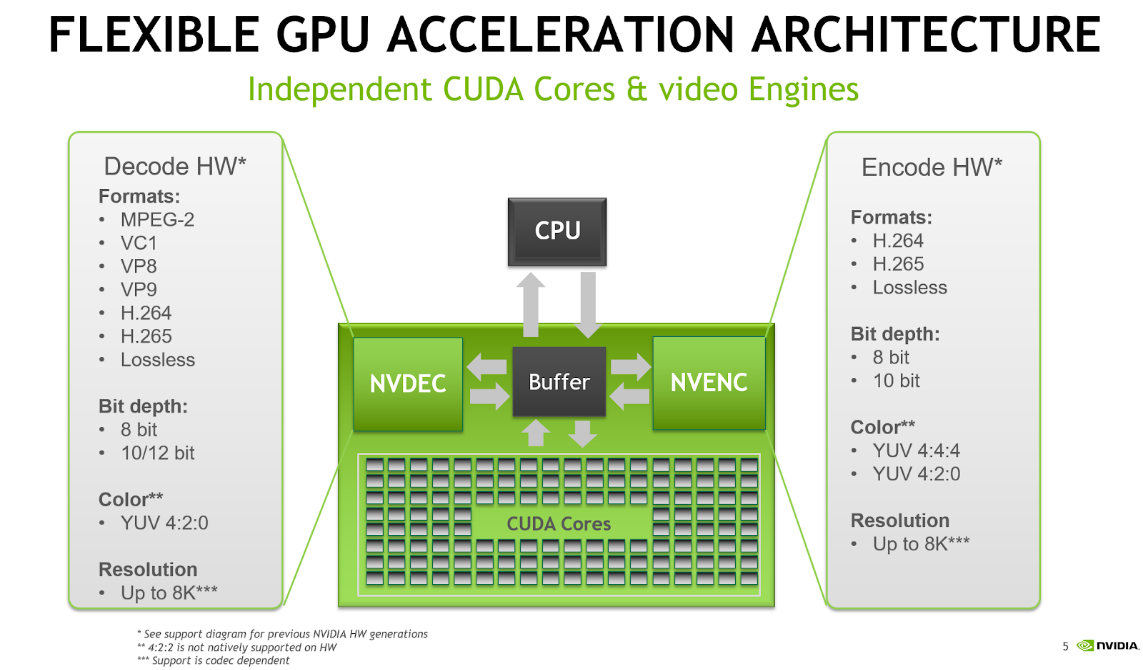

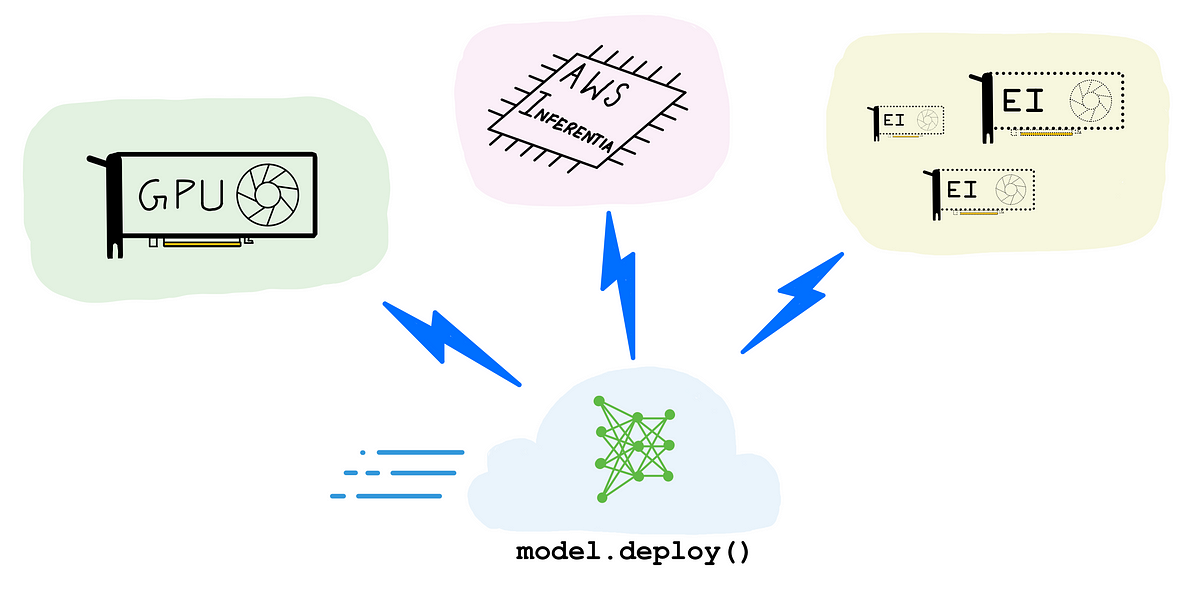

A complete guide to AI accelerators for deep learning inference — GPUs, AWS Inferentia and Amazon Elastic Inference | by Shashank Prasanna | Towards Data Science

Intel Optane Memory - M.2 2280 16GB PCIe NVMe 3.0 x2 Memory Module/System Accelerator - MEMPEK1W016GAXT - Newegg.com